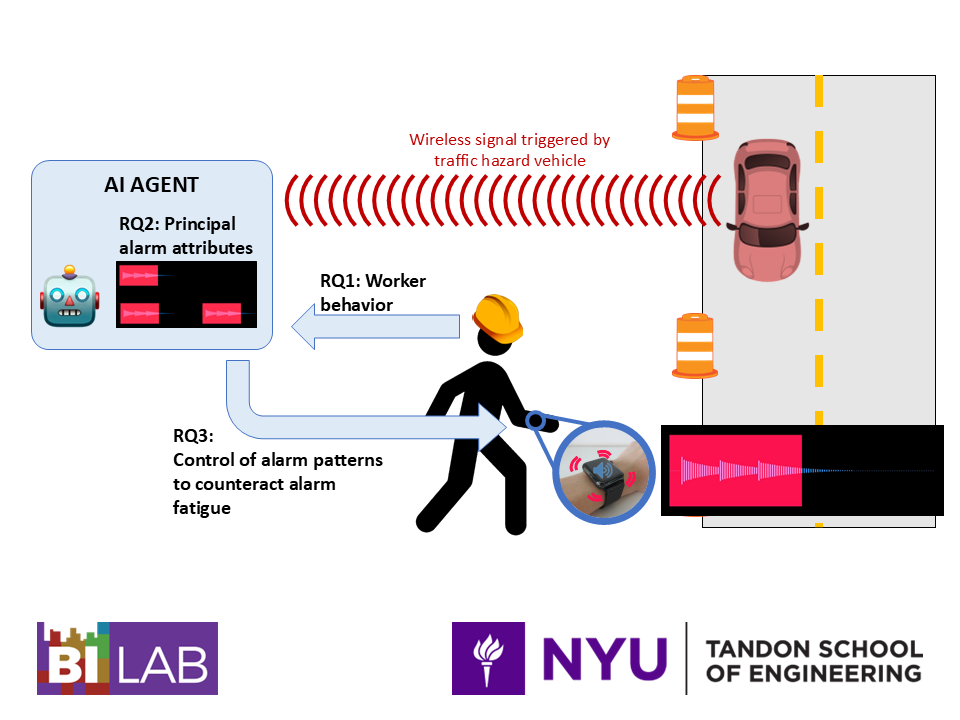

Recent statistics in the United States show an increasing rate of construction worker fatalities caused by vehicles crashing into roadway work zones, peaking at over 900 deaths in 2021. Research in transportation safety has mainly investigated these accidents in relation to the behavior and awareness of motorists, leaving the role of roadway workers’ behavior poorly understood. Meanwhile, commercial roadway work zone intrusion alert (WZIA) systems have recently been deployed to raise alarms (e.g., sounds, flashing lights) that warn workers of traffic hazards (e.g., a speeding car) and prevent accidents, but workers respond more slowly the farther they stand from a WZIA’s stationary alarm source (e.g., a loudspeaker or lamp). Wearable warning devices (e.g., smartwatches) have the potential to address this limitation by ensuring that workers always perceive alarms on their bodies. As wearable warning devices are adopted in roadway work zones, however, workers may still experience alarm fatigue from repeated exposure to alarms with constant attribute-values, which reduces their responsiveness to traffic hazards over time. To address these challenges, prior research at BILAB initiated an integrated traffic and virtual reality (VR) platform that simulates realistic roadway work zones with surrounding traffic flow.
Using this platform to collect data on human roadway workers’ behaviors and alarm reactions, this dissertation makes three major contributions: (1) a deep learning model that accurately predicts roadway workers’ trajectories based on their construction activities and proximity to traffic vehicles, (2) an identified list of alarm attributes (e.g., modality) and values (e.g., “haptics and sounds”) that informs the design of future wearable warning devices for roadway worker safety applications, and (3) a reinforcement learning-based intelligent control system that fine-tunes alarm attribute-values to minimize alarm fatigue in worker reactions.

Lu, D., & Ergan, S. (2025). Principal attributes of wearable warning alarms to promote roadway worker safety. Advanced Engineering Informatics, 67, 103481.

Lu, D., Ergan, S., & Ozbay, K. (2025). Reinforcement learning-based optimal control of wearable alarms for consistent roadway workers’ reactions to traffic hazards. Journal of Transportation Safety & Security, 1-25.

Lordianto, B., Lu, D., Ho, W., & Ergan, S. (2024). Hapti-met: A construction helmet with directional haptic feedback for roadway worker safety. In: EG-ICE 2024 International Conference on Intelligent Computing in Engineering, Vigo, Spain, July, 2024, 453-462.

Lu, D., & Ergan, S. (2023). Predicting roadway workers’ safety behaviour in short-term work zones. In: EG-ICE 2023 International Conference on Intelligent Computing in Engineering, London, United Kingdom, July, 2023.

Qin, J., Lu, D., & Ergan, S. (2022). Towards increased situational awareness at unstructured work zones: Analysis of worker behavioral data captured in VR-based micro traffic simulations. In: International Conference on Computing in Civil and Building Engineering, Cape Town, South Africa, October, 2022, 63-77.

Zou, Z., Bernardes, S. D., Zuo, F., Ergan, S., & Ozbay, K. (2022). Developing an integrated platform to enable hardware-in-the-loop for synchronous VR, traffic simulation and sensor interactions. Advanced Engineering Informatics, 51, 101476.